Artificial Intelligence Generates Humans’ Faces Based on Their Voices

by Meilan Solly, Smithsonian.com

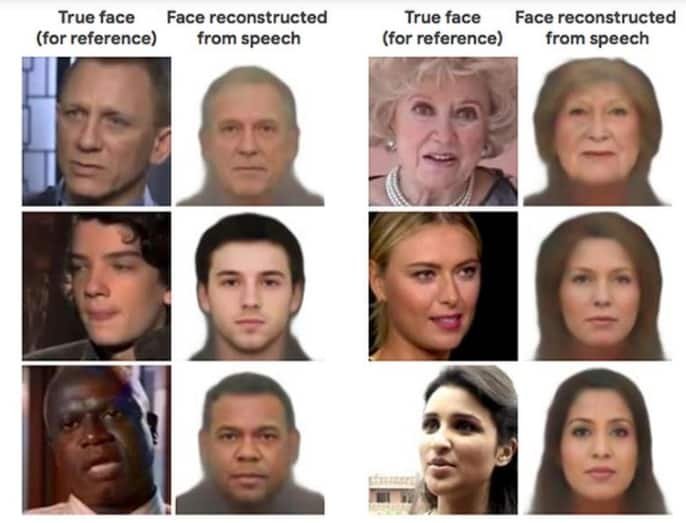

A new neural network developed by researchers from the Massachusetts Institute of Technology is capable of constructing a rough approximation of an individual’s face based solely on a snippet of their speech, a paper published on the preprint server arXiv reports.

The team trained the artificial intelligence tool—a machine learning algorithm programmed to “think” much like the human brain—with the help of millions of online clips capturing more than 100,000 different speakers. Dubbed Speech2Face, the neural network used this dataset to determine links between vocal cues and specific facial features; as the scientists write in the study, age, gender, the shape of one’s mouth, lip size, bone structure, language, accent, speed and pronunciation all factor into the mechanics of speech.

According to Gizmodo’s Melanie Ehrenkranz, Speech2Face draws on associations between appearance and speech to generate photorealistic renderings of front-facing individuals with neutral expressions. Although these images are too generic to identify a specific person, the majority of them accurately pinpoint speakers’ gender, race and age.

